When schools ask how to prepare students for an AI-driven world, the answer is rarely “teach them to use AI tools.” C-Academy’s Human Futures Design Thinking programme was built on a different premise: the most critical skill students need is not technical fluency with AI, but the human capacity to direct it by asking better questions, challenging assumptions, and designing solutions that put people first. This is the foundation of human-centred innovation, and it is what separates meaningful AI implementation from surface-level adoption of emerging technologies.

1. Why AI Alone Is Not Enough: The Problem We Set Out to Solve

The schools C-Academy works with are not short of enthusiasm for AI. Teachers have experimented with generative AI tools. Students have used AI applications to draft essays and generate ideas. What kept emerging in conversations with heads of department (HODs) and curriculum coordinators was a deeper concern: students were becoming better at using AI outputs, but not better at evaluating them.

The gap was not technological. It was human. Students struggled to frame the right problem before turning to AI for answers. They accepted generated outputs uncritically. They found it difficult to empathise with end users when AI could produce a plausible-sounding solution in seconds. What was missing was not more exposure to emerging technology — it was the design thinking process that teaches students to lead with empathy before reaching for any tool.

This is the problem C-Academy set out to address with the Human Futures programme. The starting premise was that AI tools without design thinking skills create a dangerous shortcut: fast answers to the wrong questions. The programme was designed to put the human back at the centre, using EDIT Design Thinking® as the framework that governs how students engage with AI, not the other way around.

“We developed Human Futures after seeing a clear gap in how students were being introduced to AI. Schools were asking about AI readiness, but what was often missing was a human-centred way for students to think about trust, creativity, and responsibility. Human Futures was our response to that need.”

2. What the Human Futures Programme Actually Looks Like

Human Futures is a 6-session AI design thinking programme for secondary school students. It is not an AI literacy module. It is a design thinking programme in which AI serves as one of several tools, alongside physical prototyping, empathetic research, and ideation exercises, within a structured, experiential learning journey.

Below is the standard 6-session learning journey.

Session 1 — Introduction to Design Thinking and Human Futures: Students are introduced to EDIT Design Thinking® and the fundamentals of human-centred innovation in an AI-shaped world. They explore the four stages of Empathise, Define, Ideate, and Test while experiencing AI first-hand: interacting with a generative AI tool, comparing AI output with their own thinking, and discussing when AI helps and when it replaces critical thought. Through guided activities, students begin identifying real tensions between convenience, originality, trust, responsibility, and human connection, and start framing early opportunity areas for their Human Futures project.

Session 2 — The AI Futures Walk: Students conduct a structured observation activity to discover how AI and digital technologies operate in their daily environment. Using C-Academy’s Future Signals framework, they document where AI appears in school systems, apps, public services, and social interactions — identifying patterns, tensions, and opportunities that others overlook. Students use AI to help analyse and categorise their observation data, then compare AI-generated insights with their own human-generated findings. This builds a foundational understanding of algorithmic reasoning and its limitations — a core element of social and systems intelligence.

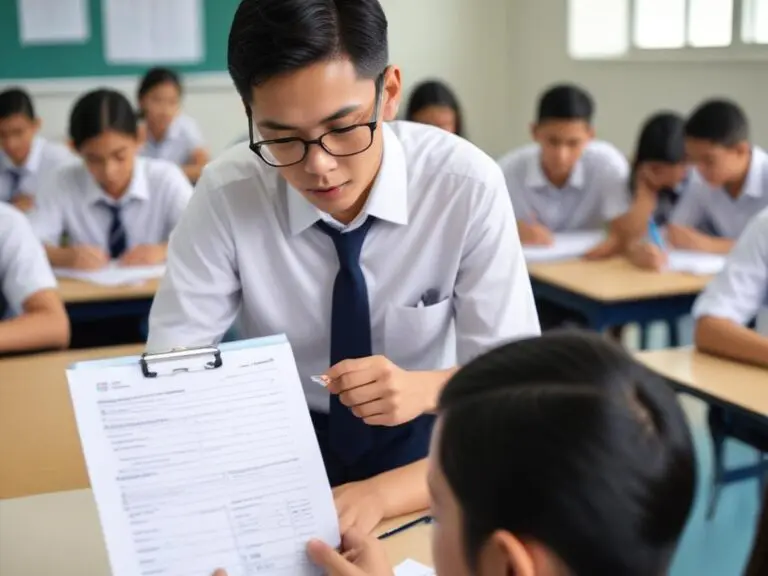

Session 3 — Empathise and Define (What Do People Actually Need?): Students identify a target group, such as peers, younger students, teachers, or families, and explore their needs, concerns, and behaviours in relation to AI, digital life, originality, trust, or future readiness. Using interviews, observations, empathy maps, and insight clustering, students turn what they have learned into clearer opportunity statements and focused “How might we” questions. They also prompt an AI chatbot to generate empathy insights and compare the results with their real interview data, discovering what AI can understand about human feelings and what it fundamentally misses. This is where problem-solving begins in earnest: not with solutions, but with people.

Session 4 — Ideate Human + AI Solutions: Students generate a wide range of possible solutions using C-Academy’s structured brainstorming and ideation tools, including Random Cards and Idea Dice. They also use AI as a brainstorming partner, then critically evaluate AI-generated suggestions against their own ideas. Students compare, refine, and select ideas based on desirability, feasibility, responsibility, and potential impact. Each team develops an AI + Human Design Brief (C-Academy’s signature deliverable for this theme) that clearly defines what AI should do, what humans should do, and why. This is hands-on learning at its most practical: students are not theorising about AI strategies for innovation; they are practising them.

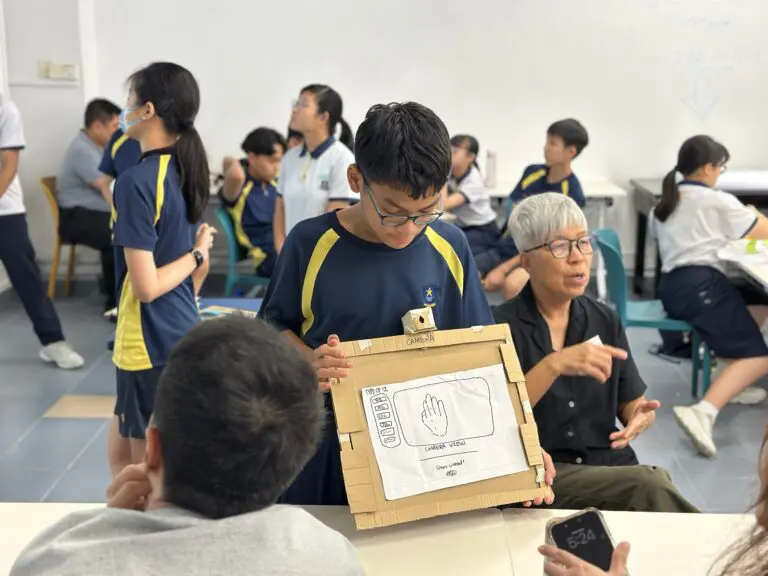

Session 5 — Make It Real but Keep It Human: Students turn their selected ideas into quick, tangible prototypes such as storyboards, mockups, posters, role plays, mini campaigns, or low-fidelity digital concepts. Rapid prototyping is used deliberately here: the goal is not a polished product but a testable idea. Students gather feedback on clarity, usefulness, originality, and responsible AI use, then refine their concepts and strengthen their AI + Human Design Brief.

Session 6 — Showcase and Reflection of Our Human Futures: Students present their final Human Futures concepts through a team showcase. They explain the problem they explored, the users they considered, the insights they uncovered, and how their solution responds to a real human need in an AI-shaped world — with their AI + Human Design Brief as a centrepiece. Students also reflect on what they learned about empathy, critical thinking, collaboration, responsible creativity, and how their own relationship with AI has changed through the programme.

3. Where Design Thinking and AI Intersect in Practice

The most productive intersection — and the one that surprised the C-Academy facilitation team most — emerged in the Define phase.

Students who had grown up with search engines and AI tools had a strong default: retrieve an answer, accept it, move on. The EDIT Design Thinking® methodology requires the opposite. Before any solution is considered, students must arrive at a well-framed “How Might We” (HMW) statement. This cannot be outsourced to AI because it requires a human judgement about what matters and who is affected. It is the kind of reasoning that anthropologists and ethnographers practise: observing human behaviour closely before drawing any conclusions.

What the facilitation team found was that introducing AI during the Define phase — asking students to test their HMW statements against AI-generated problem framings — created productive friction. Students discovered that AI would often reframe their problem in a way that was technically coherent but missed the emotional or social dimension they had observed in the Learning Journey.

Consider a student who frames the problem as: “How might we help elderly residents in our community feel less anxious about using AI-powered services?” When the same prompt is fed to an AI chatbot, it tends to return a reframing focused on interface simplicity or feature reduction — technically reasonable, but stripped of the emotional texture the student had gathered through direct observation. The student’s version reflects genuine empathy; the AI’s version reflects pattern-matching. Noticing that difference is itself a learning outcome.

This friction became a teaching moment about what human-centred design means. It is not anti-technology. It is the capacity to use technology with intention — knowing when to trust an AI output and when to override it. It is also where systems thinking enters: students begin to see AI not as a neutral tool but as a system with its own assumptions, trained on particular data, optimised for particular outcomes.

In the Ideation phase, a similar dynamic emerged. AI could generate many ideas quickly. But students trained in divergent thinking using Random Cards consistently produced ideas that were more unexpected and more empathy-driven than AI-generated brainstorms alone. The combination, human intuition expanded by AI breadth, produced stronger concept sets than either approach on its own. This is the creative process working as it should: iterative learning, not one-shot generation.

4. What Students Typically Discover

Across design thinking programmes that integrate AI as a thinking tool, a consistent pattern emerges: students begin as enthusiastic AI users and, through structured reflection, become more discerning ones.

The shift happens gradually. In early sessions, students tend to accept AI-generated outputs at face value — the responses are fluent, confident, and fast. By the midpoint of the programme, after conducting real empathy interviews and building their own problem frames, students begin to notice the gap between what AI produces and what they actually observed. The AI’s synthetic personas feel thin compared to the real people they spoke with. The AI’s problem framings feel generic compared to the specific tensions they uncovered.

By the Prototyping phase, students working through the design thinking process regularly question AI-generated suggestions with the question the EDIT Design Thinking® framework has trained them to ask: *who is this actually for?* That shift, from passive AI user to active design thinker interrogating AI, is the clearest indicator that ethical design thinking is taking hold.

This is also where the programme’s relevance to real-world challenges becomes most visible. The students are not preparing for a test. They are practising the same reasoning that business strategists, innovation leaders, and product teams use when evaluating whether an AI application genuinely serves human needs or simply automates an existing assumption. The difference between those two outcomes is the difference between meaningful systems design and efficient mediocrity.

5. What We Would Do Differently

No programme is designed perfectly on the first iteration. C-Academy’s facilitation team identified several things they would adjust in subsequent runs of the Human Futures programme.

The Learning Journey needs a tighter brief. In early runs, students arrived at the empathy mapping session with broad observations but not enough specificity about the humans in the AI system. Adding a structured observation prompt — directing students to document specific moments of human-AI friction — produced richer empathy maps in later sessions. This is a lesson that applies broadly to experiential learning design: the quality of the output depends heavily on the quality of the brief.

AI tool selection matters more than expected. Not all generative AI tools are equally suited to educational use. Tools that showed their reasoning or surfaced uncertainty helped students evaluate outputs critically. Tools that produced authoritative-sounding answers without qualification had the opposite effect, reinforcing the passive acceptance the programme was designed to challenge. For schools and industry professionals designing similar programmes, this is a practical consideration worth building into the curriculum from the start.

More time is needed on the Define-to-Ideate transition. This is the most cognitively demanding moment in the programme: students move from a deeply human, empathy-grounded problem frame into a divergent ideation space. Rushing this transition produced ideas that were technically interesting but emotionally thin. Slowing it down — and using iterative learning checkpoints to ensure students stayed connected to their empathy insights — produced stronger, more human-centred concepts.

6. What This Means for Schools Planning AI-Integrated Programmes

The Human Futures programme offers a practical model for schools thinking about how to integrate AI into existing design thinking or applied learning frameworks — not as a standalone AI literacy module, but as a dimension of human-centred intelligence that students develop through real-world challenges.

The core design principle is sequence: human insight before AI input. When students understand the problem from a human perspective first, they use AI more effectively, more critically, and more ethically. When AI comes first, it short-circuits the empathy and problem-framing that make any solution meaningful. This is not a theoretical position; it is what the design thinking process, applied consistently, demonstrates in practice.

For HODs and curriculum planners, the implication is structural. AI integration is not a content addition; it does not mean adding a session on “how to use ChatGPT.” It means designing the conditions in which students engage with AI as a thinking partner, not an answer machine. EDIT Design Thinking® provides that structural scaffold: its central question, “whose problem is this, and what do they actually need?”, is asked before any tool is picked up.

The skills students build through this process — empathy, problem-framing, ethical reasoning, iterative prototyping — are the same innovation capabilities that community care organisations, entrepreneurial initiatives, and transformation projects require from the people who lead them. Schools that develop these capabilities are not just preparing students for AI. They are preparing students to shape it.

Schools considering a similar programme can explore how C-Academy’s [design thinking workshop](https://c-academy.org/let-out-your-creativity/) framework adapts to AI-integrated themes. The methodology is consistent; the contextual layer — in this case, AI and its human implications — becomes the real-world domain students design within.