Post-exam periods in Singapore secondary schools are rarely wasted — but they are frequently under-used. Design thinking, when structured well, turns these weeks into some of the most meaningful learning time in the school year. When structured poorly, it becomes just another activity students sit through.

1. Why Post-Exam Periods Are a Missed Opportunity in Most Schools

For most students, the end of examinations signals relief. For many schools, it signals a logistical challenge: how do you keep students engaged, justify curriculum time, and deliver something that holds up to scrutiny — all within a compressed window?

The default answer is often a mix of movie screenings, games days, and loosely organised talks. These are not without value, but they rarely connect to the school’s broader learning objectives. What gets lost is the opportunity to use freed-up time — when students are relaxed, less performance-anxious, and more open to taking risks — to build exactly the competencies that exam formats cannot assess.

21st century competencies (21CC) such as adaptive thinking, collaboration, and communication skills develop through doing, not passive reception. Post-exam periods, stripped of assessment pressure, offer a rare setting in which students can fail safely, iterate, and own their learning. Experiential learning in this window is qualitatively different from what is possible during the academic term, and most schools don’t capitalise on it.

Experienced design thinking facilitators consistently observe that when students enter a design thinking workshop during post-exam periods, there is a visible shift in energy. The absence of academic stakes creates space for creative confidence to emerge. Students who are guarded during term time become more willing to share ideas, challenge assumptions, and take real ownership of a design challenge. The post-exam window provides, in many ways, ideal conditions for human-centered design to take root.

C-Academy has delivered design thinking workshops at six Singapore secondary schools, as well as workshops for participants outside secondary schools. Facilitators consistently observe that student engagement during post-exam periods is often higher than during term time, not because the content is easier, but because the stakes feel lower and curiosity takes over.

2. What Makes Design Thinking a Strong Fit for Post-Exam Enrichment

Not every enrichment format suits a post-exam window. Activities that require months of preparation, sustained academic effort, or complex coordination across departments are hard to execute well in two to three weeks. A design thinking workshop is different.

A well-facilitated design thinking workshop is self-contained, experiential, and scalable. It does not require prior knowledge from students. It produces visible outputs (empathy maps, How Might We statements, user personas, rapid prototypes, and pitch presentations) that give students a clear sense of progression and completion. And it directly builds the 21CC skills Singapore schools are accountable for developing through their Applied Learning Programme (ALP) commitments.

C-Academy’s EDIT Design Thinking® methodology (Empathise, Define, Ideate, Test) is structured to work within compressed timelines without losing depth. Whether the theme is a sustainability challenge, a cyber wellness issue, or a community design challenge, the methodology adapts to the school’s context and student profile. Schools choose the angle most relevant to their Character and Citizenship Education (CCE) curriculum and student development programmes. The structured ideation and problem framing tools work regardless of theme.

What also makes design thinking effective in this window is its social dimension. Students work in small groups, navigate disagreement, divide responsibilities, and present together. These are precisely the collaboration skills and presentation skills that rarely receive explicit development time during the academic year. For many students, the post-exam design thinking workshop becomes one of the most memorable educational experiences of the year — not because it was easy, but because it was genuinely theirs.

3. The 21st Century Competencies a Post-Exam Design Thinking Programme Develops

Singapore’s CCE curriculum and MOE’s 21CC framework identify a set of competencies that go beyond academic content: critical thinking, civic literacy, cross-cultural skills, communication skills, and collaborative problem solving. A well-designed post-exam design thinking workshop addresses all of these directly.

Critical thinking and analytical thinking are built through the Define phase, where students synthesise empathy data, identify patterns, and construct well-reasoned problem framing — not just accept the first explanation that comes to mind. Logical thinking is developed as students evaluate their ideas against real constraints: is this desirable, is it feasible, does it genuinely address the user’s need?

Civic literacy emerges when the design challenge is anchored in a real community context — a sustainability challenge, a public service gap, or a challenge affecting people in their immediate environment. Students engage with real-world problems rather than hypothetical ones, which also supports the cross-curricular projects that many ALP providers are asked to deliver.

Creative problem solving and creative confidence are developed through structured ideation — particularly when facilitated with tools like C-Academy’s Random Cards and Idea Dice, which are specifically designed to break habitual thinking patterns. Technology literacy can be woven in when relevant, for instance in challenge sprints involving digital tools or scientific exploration.

Holistic development — encompassing character development, student well-being, and independent learning — is supported by the student-centred learning structure of design thinking. Students set their own direction within a scaffold, build on each other’s ideas, develop practical skills that transfer beyond the workshop, and practise goal setting in a real project context.

4. The Common Mistakes Schools Make When Running Post-Exam Design Thinking

Schools that have had mixed results with design thinking enrichment usually share a handful of common patterns. Recognising them early saves significant time and budget.

Treating it as a filler block. When design thinking is scheduled without clear learning objectives, defined student outcomes, or a connection to the school’s ALP objectives, facilitators have nothing meaningful to anchor the experience. Students sense this quickly. The workshop becomes a series of activities rather than a structured learning journey with genuine curriculum alignment.

Compressing too aggressively. A single-day design thinking taster can spark interest, but it rarely builds design thinking competence. Schools sometimes try to run a full programme in half the time, cutting the empathise and define phases to get to ideation faster. This produces ideas without insight — students generate solutions to real-world problems they haven’t genuinely understood. Problem framing cannot be rushed without undermining everything that follows.

Mismatching the group. Design thinking works across ability levels, but student-centred learning requires facilitation calibrated to the cohort. A group of Secondary 1 students working through their first exposure to empathy interviews needs a different approach from Secondary 4 students revisiting design thinking skills. When programme design doesn’t account for the actual cohort, student buy-in drops.

No follow-through after the workshop. Post-exam enrichment that ends with a pitch presentation and nothing else loses much of its developmental value. Measurable outcomes, including a structured post-workshop report and formative assessment data, require deliberate documentation. Schools that build this in see stronger competency growth and more defensible ALP reporting.

A common pattern in intake conversations is a school that ran a design thinking taster in a previous year and found it “didn’t quite land.” In most cases, the issue was not the design thinking methodology itself but insufficient programme design: no clear design challenge brief, facilitators without structured ideation experience, or a session breakdown that skipped problem framing entirely.

5. What a Well-Structured Post-Exam Design Thinking Programme Looks Like

C-Academy’s design thinking programmes typically run across four to six sessions, adaptable to each school’s post-exam activities timeline. Even a condensed full-day challenge sprint format is possible for schools with very compressed windows. The design thinking skills students develop are equivalent; the structure adapts to the school’s needs.

Session Breakdown: What Happens at Each Stage

Session 1, Introduction to Design Thinking: Students are introduced to EDIT Design Thinking® through structured, hands-on activities. They learn the stages from Empathise to Test, begin mapping users and systems related to the chosen design challenge, and frame early “How might we” questions to guide initial solution concepts. This session builds the foundation for the design thinking skills students will apply throughout the programme.

Session 2, Learning Journey: Students go on a thematic learning journey to observe how the challenge they are exploring plays out in a real setting. They engage with stakeholders, identify practical enablers and gaps, and gather first-hand insights that ground the rest of the programme in real-world problems rather than assumptions.

Session 3, Empathise and Define: Back in the workshop, students map key observations, identify pain points, and capture insights in empathy maps. They develop user personas and craft focused “How might we” problem framing statements for their chosen challenge. This is the phase most often rushed, and the most important to protect for genuine design thinking competence development.

Session 4, Ideate — Divergence and Convergence: Using structured ideation tools including C-Academy’s Random Cards and Idea Dice, students generate a wide range of ideas through divergent thinking, then cluster and evaluate them through convergent thinking, selecting strong concepts to develop further. The deliberate pairing of divergent and convergent structured ideation is what separates this from unstructured brainstorming.

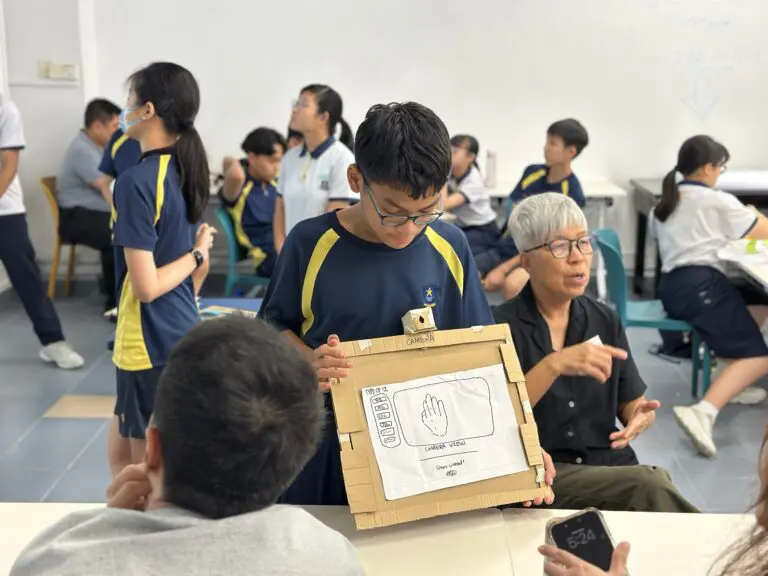

Session 5, Rapid Prototyping and User Testing: Teams turn selected concepts into quick, tangible prototypes — posters, mock layouts, role plays, or low-fidelity models. They test these with peers and teachers, gather feedback, and refine their solutions. The emphasis is on iterative learning through user testing, not producing a polished product.

Session 6, Final Presentation: Students share their final concepts and design journey with school leaders, teachers, and classmates. The emphasis is not just on the quality of the idea or the finish of the prototype — it is on how clearly students can articulate their understanding of the entire design thinking process: the empathy work, the problem framing, the ideation choices, and what they learned from user testing.

What a post-exam format uniquely enables is time and mental space. In term-time workshops held after school hours, C-Academy has observed students at Methodist Girls’ School (Secondary) generating over 56 design thinking ideas and building 41 physical prototypes in a single programme — under time pressure, after a full school day. In a post-exam enrichment programme, with more time, lower stress, and students who are mentally rested, the conditions for creative output and competency growth are even more favourable.

6. How to Choose the Right ALP Provider for Post-Exam Delivery

Not all ALP providers are equally suited to post-exam enrichment delivery. When evaluating providers, heads of department (HODs) and curriculum planners should look beyond brochure claims and assess a few practical criteria.

Facilitator credentials matter. Core facilitators should have direct experience with the design thinking methodology — not just theoretical familiarity, but demonstrated practice in facilitation, structured ideation, and competency assessment of student outcomes. Ask to see each facilitator’s background, not just the organisation’s portfolio.

Programme design flexibility is essential. A provider that offers only one fixed format may not suit a school with a three-day post-exam window or a two-week enrichment block. C-Academy programmes have been delivered in formats ranging from four to six full sessions through to condensed full-day challenge sprint formats. The design thinking skills developed are equivalent; the structure adapts.

Curriculum alignment and measurable outcomes should be built in from the outset. The best ALP providers will connect the programme’s learning objectives to the school’s CCE curriculum commitments and ALP objectives before the programme begins, not as an afterthought. They will also provide a post-workshop report documenting competency assessment data that HODs can use for ALP reporting and teacher professional development planning.

Teacher professional development is worth asking about. Some schools use post-exam enrichment as an opportunity to expose teachers to design thinking facilitation — as co-facilitators or observers. This supports longer-term curriculum alignment and independent learning beyond the enrichment programme.

7. How to Evaluate Whether Your Post-Exam Programme Actually Worked

HODs and teachers often ask a reasonable question after any enrichment programme: how do we know it made a difference?

C-Academy’s competency assessment process is structured as follows. Before the programme begins, students complete a pre-workshop evaluation covering their understanding of the design thinking process and their confidence across core design thinking skills. During the final session, mentor facilitators assess each group’s presentation — not just on the quality of their ideas or the finish of their prototypes, but on their demonstrated understanding of the full EDIT Design Thinking® journey: how they empathised, how they framed the problem, how they used structured ideation to generate and refine solutions, and what they learned from user testing. Feedback is given to each group directly, making the final session an active learning moment rather than a passive showcase.

After the programme, students complete a post-workshop evaluation covering the same competency dimensions as the pre-workshop baseline. Comparing pre- and post-workshop data gives schools a direct measure of competency growth, and gives students a concrete record of their own skill development. Across C-Academy programmes, the average improvement in design thinking competence is 37%. At Sembawang Secondary School, overall competence scores rose by 56 percentage points, from 13.5% to 69.5%, assessed independently of the facilitation team.
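For schools that want to reproduce this comparison from their own evaluation data, the arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not C-Academy’s actual scoring method: it assumes each evaluation yields an overall competence score as a percentage, and the function name `competence_gain` is hypothetical. The only figures used are the Sembawang Secondary School scores quoted above, treated here as cohort-level averages.

```python
from statistics import mean


def competence_gain(pre_scores, post_scores):
    """Return (pre_mean, post_mean, gain), with gain in percentage points.

    Assumption: every score is an overall competence percentage (0-100).
    How the underlying evaluation is scored is not specified in this article.
    """
    pre = mean(pre_scores)
    post = mean(post_scores)
    return pre, post, post - pre


# Cohort-level figures quoted in the article for Sembawang Secondary School.
pre, post, gain = competence_gain([13.5], [69.5])
print(f"{pre:.1f}% -> {post:.1f}% (+{gain:.1f} percentage points)")
```

Reporting the gain as a difference in percentage points, alongside the raw pre- and post-workshop scores, keeps the result unambiguous: a rise from 13.5% to 69.5% is 56 points, which is not the same thing as a 56% relative increase.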

Beyond quantitative measures, schools can look for qualitative signals: Do students reference the design thinking methodology when explaining how they approach problems? Do teachers observe stronger collaboration skills and analytical thinking in subsequent group work? Can students articulate their own competency growth in their own words?

The clearest sign that a post-exam design thinking workshop worked is not what students produce during the session — it is the measurable outcomes they carry out of it, and how clearly those outcomes map to the learning objectives the school set at the outset.

8. Connecting Post-Exam Design Thinking to Your School’s Broader Strategy

Post-exam design thinking enrichment does not have to be a standalone event. With the right programme design, it becomes part of a school’s broader student-centred learning strategy — one that connects to CCE curriculum priorities, ALP objectives, goal setting across student development programmes, and the 21st century competencies framework MOE has set out.

Schools that use post-exam periods most effectively treat them not as a wind-down but as a concentrated opportunity for the kind of applied, cross-curricular projects that term-time timetables rarely allow. A sustainability challenge that draws on Geography and Science. A civic literacy design challenge that connects to CCE units on active citizenship. A challenge sprint built around community care — the kind of real-world problem that produces genuine empathy and lasting student outcomes.

When these programmes are well documented, with pre- and post-workshop competency assessment data, a structured post-workshop report, and clear curriculum alignment to ALP and CCE goals, they become evidence of holistic development that strengthens the school’s ALP reporting, supports teacher professional development planning, and gives students a meaningful activity that stays with them long after the exams are forgotten.