Preparing students for an AI-shaped world is not primarily a technology problem — it is a human problem. C-Academy’s Human Futures programme was built on that premise, and everything we learned delivering it reinforced why design thinking is not a complement to AI education: it is the foundation.

—

1. Why AI Alone Is Not Enough: The Case for Human-Centred Thinking

When conversations about AI in education dominate, the instinct is to focus on tools: how to use ChatGPT, how to prompt, how to work alongside generative AI systems. These are useful skills. But they answer the wrong question.

The more important question is: what should humans be doing that AI cannot? Answering it requires students to develop capacities that no amount of AI tool training builds: empathy, problem-framing, ethical design reasoning, and the ability to sit with ambiguity.

This is where design thinking enters. C-Academy’s EDIT Design Thinking® methodology — Empathise, Define, Ideate, Test — is structured precisely around the human capacities that remain irreplaceable in an AI-augmented world. Empathy cannot be automated. Defining the right problem, rather than just solving the presented one, is a distinctly human skill. And the creative confidence to generate and test unconventional ideas is something students must practise, not download.

Design thinking is not just a set of tools and techniques; it is a way of understanding people first. In Human Futures, we apply this principle to one of the most important questions students face today: how should AI be designed, used, and governed to serve people well? We focus on learners, their growth, and the meaningful connections they build through empathy, collaboration, and real-world problem solving — empowering students to become active shapers of technology, not passive consumers of it.

Kimming Yap, Co-Founder of C-Academy, puts it directly: “Generative AI is extraordinarily good at producing outputs. What it cannot do is tell you whether you are solving the right problem for the right person. That is a human-centred intelligence question — and it is what we train students to ask.” This perspective shapes every dimension of the Human Futures programme, from how facilitators frame activities to how outcomes are measured.

Early in programme development, C-Academy observed that students with strong AI tool familiarity but no structured problem-solving framework struggled to use those tools purposefully. They could generate outputs, but they could not evaluate whether those outputs addressed the actual human need at stake. EDIT Design Thinking® gave them that structure: a process that makes AI-enabled ideas actionable rather than theoretical.

—

2. How the Human Futures Programme Came Together

The Human Futures programme did not arrive fully formed. It was built through iterative learning — shaped by what worked in the room, what fell flat, and what students said when asked directly what felt meaningful.

As AI reshapes how students learn, create, and communicate, they need more than technical skills. They need to understand people, question what they see, and design solutions where technology serves humanity, not the other way around. C-Academy recognised this gap and built the Human Futures programme, its AI design thinking workshop in Singapore, in direct response: a structured curriculum that goes beyond AI literacy to address the harder question of what it means to be human in an AI-shaped world.

The design challenge C-Academy faced in building the curriculum was similar to the challenge students face inside it: how do you define the right problem? Early versions of the programme leaned heavily on the “AI literacy” framing: helping students understand how AI systems work, what they can and cannot do, and where bias enters. That content is valuable. But facilitation teams quickly noticed that students engaged more deeply when the focus shifted from understanding AI to designing human responses to AI.

The shift sounds subtle. In practice, it changed everything. Instead of asking “how does a recommendation algorithm work?”, sessions began asking “whose interests does a recommendation algorithm serve — and who gets left out?” That reframe moved students from passive recipients of information to active designers of futures — a core goal of experiential learning at its most effective.

The Learning Journey component — C-Academy’s signature stage in which students engage directly with a real-world context before any design work begins — became particularly important in this programme. Encountering real people whose lives are being shaped by AI decisions (in hiring, in healthcare, in community care settings) gave students something abstract systems thinking alone never could: felt stakes. It is here that empathetic research — structured observation and listening before any designing begins — lays the foundation for everything that follows.

—

3. What EDIT Design Thinking® Looks Like When AI Is the Context

Running EDIT Design Thinking® with AI as the design context introduced facilitation challenges that the team had not fully anticipated. These challenges are not unique to C-Academy. They reflect the broader difficulty of applying a human-centred design process to problems shaped by AI systems that are often opaque, fast-moving, and poorly understood even by the adults in the room.

The standard Human Futures learning journey runs across six sessions totalling approximately 18 hours and accommodates up to 50 participants. The arc moves students deliberately through the full EDIT cycle in an AI context:

Session 1 introduces EDIT Design Thinking® and the fundamentals of human-centred innovation. Students experience AI first-hand — interacting with generative AI tools, comparing AI output with their own thinking, and beginning to identify real tensions between convenience, originality, trust, and human connection.

Session 2 takes students on an AI Futures Walk — a structured observation activity using C-Academy’s Future Signals framework to document where AI appears in school systems, apps, and social interactions. Students use AI to help analyse their data, then compare AI-generated insights with their own findings.

Session 3 moves into deep empathy work. Students identify a target group — peers, younger students, teachers, or families — and explore their needs and concerns around AI using interviews, observations, and empathy maps. They also prompt an AI chatbot to generate empathy insights, then compare results with their real interview data, surfacing what AI can and cannot understand about human feeling.

Session 4 focuses on ideation. Students generate human + AI solutions using Random Cards and Idea Dice alongside AI as a brainstorming partner — then critically evaluate AI-generated suggestions against their own. Each team develops an AI + Human Design Brief, C-Academy’s signature deliverable, specifying what AI should do, what humans should do, and why.

Session 5 is prototyping: storyboards, mockups, role plays, and low-fidelity concepts tested with peers and teachers, refined based on feedback.

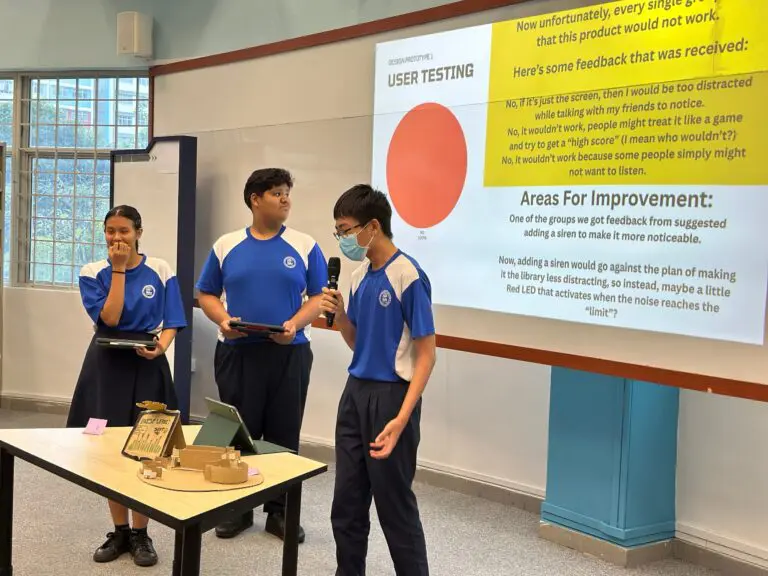

Session 6 is the showcase — students present their Human Futures concepts to school leaders and peers, with their AI + Human Design Brief as the centrepiece, followed by structured reflection on how their relationship with AI has changed through the programme.

The Empathise phase, in particular, required new scaffolding across all deliveries. When the “user” is someone navigating an AI-mediated system — a job applicant screened by an algorithm, a student receiving AI-generated feedback — the empathy work is more complex. Students needed help distinguishing between what a user experiences and what a system produces. Facilitators found that slowing this phase down, and building in structured observation tasks before any interviewing, made a significant difference. This is empathetic research in practice: attending carefully to lived experience before rushing to solutions.

The Define phase surfaced a recurring challenge: students wanted to fix AI. How Might We statements kept drifting towards technical solutions (“how might we make the algorithm fairer?”) rather than human-centred ones (“how might we help job seekers understand how they are being evaluated, so they can advocate for themselves?”). Redirecting that impulse without dismissing the technical instinct became a core facilitation skill the team developed through delivery. Business strategists and innovation leaders who have run transformation projects in organisations will recognise this pattern immediately: the pull towards AI implementation as the answer, before the human problem has been properly defined.

In the Ideate phase, C-Academy’s Random Cards and Idea Dice tools proved unexpectedly effective. When students were stuck generating ideas about AI-related challenges, the structured randomness of these tools broke the impasse. The creative process of combining random stimuli with focused challenge framing consistently unlocked directions that neither pure brainstorming nor AI-generated suggestions surfaced on their own.

The Test phase raised the question that most directly challenged students: who do you test with, and how do you reach them? Rapidly prototyping solutions to AI-related human problems often required reaching users outside the school environment, which pushed students to think seriously about access, representation, and whose feedback they were prioritising. These are real-world challenges that product and innovation teams in professional settings grapple with every day.

—

4. What Students Actually Struggled With (And What That Taught Us)

The struggle points were as instructive as the successes.

Sitting with uncertainty. Students arriving with high academic achievement, in particular, found the open-ended nature of EDIT Design Thinking® genuinely uncomfortable. There is no right answer in the Empathise phase. There is no correct How Might We statement. That ambiguity, for students conditioned to optimise for marks, required active facilitation to normalise. Industry professionals who have led design studio courses or interdisciplinary tracks in tertiary settings will recognise this as one of the most consistent barriers to genuine design learning.

Separating the problem from the solution. Across cohorts, a large majority of students arrived in the Define phase already mentally committed to a solution, often a technological one. Facilitators saw the same pattern repeatedly: students heard “AI” in the challenge brief and immediately began designing AI applications, bypassing the problem-finding work entirely. Helping them hold the problem open long enough to define it well is one of the hardest facilitation challenges in any design thinking programme, and it was amplified in the AI context, where students often assumed the solution was inherently technical. Anthropologists and researchers in human-centred design fields call this “solution-first thinking,” and countering it is central to what design thinking training accomplishes.

Giving and receiving feedback on ideas. The Test phase requires students to present incomplete, imperfect prototypes and invite critique. For many, this felt exposing. C-Academy facilitators introduced structured feedback protocols that separate observations, interpretations, and evaluations, which gave students a safe container for the process. Hands-on learning is only as effective as the psychological safety built around it.

Each of these struggle points informed adjustments to the programme. Difficulties were not problems to eliminate; they were design data. This is iterative learning in action — and it models for students the very mindset the programme is designed to build.

—

5. The Outcomes That Surprised Us Most

C-Academy measures programme outcomes through pre- and post-delivery competency assessments, conducted independently of the facilitation team. Across all C-Academy secondary school programmes, the average improvement in design thinking competence is 37 percentage points — assessed across 300+ students. Separately, 78% of students reported stronger empathy after completing a programme, up from approximately 44% before. And 84% felt confident solving problems without clear answers, up from approximately 65%.

These numbers reflect what meaningful systems design looks like at the curriculum level: not activity completion, but measurable shifts in how students think.
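
To make the self-reported figures above easier to compare, the short Python sketch below separates a percentage-point gain from a relative gain. It is illustrative arithmetic only, not C-Academy's assessment method: the function names `point_gain` and `relative_gain` are hypothetical, and because the baselines are reported as approximate (about 44% and about 65%), the results are approximate too.

```python
def point_gain(before: float, after: float) -> float:
    """Change in percentage points (simple difference of two percentages)."""
    return after - before


def relative_gain(before: float, after: float) -> float:
    """Change relative to the starting value, expressed as a percentage."""
    return (after - before) / before * 100


# (before %, after %) pairs taken from the figures quoted above.
# The "before" values are approximate in the source.
reported = {
    "stronger empathy": (44, 78),
    "confident without clear answers": (65, 84),
}

for label, (before, after) in reported.items():
    print(
        f"{label}: +{point_gain(before, after):.0f} points, "
        f"about {relative_gain(before, after):.0f}% relative increase"
    )
```

On these figures, the empathy measure rose by about 34 percentage points and the confidence measure by about 19. Reporting the change in points, as the 37-point competence figure does, avoids overstating gains that start from a low baseline.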

Beyond the quantitative data, several qualitative outcomes stood out.

Students who completed the programme were more likely to ask “who is this for?” when evaluating AI-generated content — a question that indicates a shift from passive consumption to active, human-centred scrutiny. That is a more durable outcome than AI tool familiarity, which dates quickly. It reflects the development of human-centred innovation as a genuine competency, not merely a workshop activity.

Facilitators also noticed a change in how students talked about their own futures. Before the programme, conversations about AI tended to be fatalistic — “AI will take jobs”, “humans won’t be needed.” After working through EDIT Design Thinking® in the AI context, students spoke differently: about the roles they wanted to play, the problems they wanted to solve, the humans they wanted to serve.

—

6. What This Means for Schools Planning AI-Integrated Programmes

If you are a Head of Department or curriculum leader designing an AI-integrated programme, the Human Futures experience points to several practical considerations that cut across AI degree programme structures, design studio courses, and interdisciplinary tracks alike.

Start with the human problem, not the technology. The most effective AI education does not begin with AI. It begins with a real human challenge — in the school’s community, in students’ lives — and then asks how AI intersects with it. Economic perspectives on AI tend to frame the question in terms of jobs and displacement. That framing, while real, misses the more actionable question: what human capacities do we want students to take into an AI-shaped economy? Entrepreneurial initiatives, community care roles, and emerging technology fields all reward the same underlying competencies: empathy, systems thinking, and creative problem-solving.

Design thinking is the infrastructure. Without a structured framework for problem-finding, idea generation, and iterative testing, AI literacy activities produce surface engagement. EDIT Design Thinking® gives students the process to move from curiosity to meaningful action. Emerging technologies change rapidly; the design thinking process does not. That is what makes it a durable investment for schools.

Measure what changes in students, not just what they produce. The Human Futures Learning Outcomes framework captures this directly. Through the programme, students learn to think critically about AI — questioning assumptions, evaluating AI-generated content for bias and accuracy, and developing the judgement to know when AI helps and when it replaces thinking. They build human-centred empathy through structured listening and observation. They design responsibly, creating AI + Human Design Briefs that specify what technology should do and what people should do. They develop confidence navigating misinformation, digital trust, and the ethics of AI-mediated content. And they leave with a transferable problem-solving approach — the full EDIT Design Thinking® cycle — that applies to complex challenges well beyond school. C-Academy’s competency assessment approach measures growth across all these dimensions, not just activity completion. Meaningful systems design at the curriculum level requires that kind of outcome clarity.

Schools interested in bringing the Human Futures programme to their students can enquire through the C-Academy contact page at https://c-academy.org/contact-us/. The programme is available as a half-day taster (3–4 hours), a one-day sprint (6–7 hours), or the full six-session learning journey of approximately 18 hours — scoped to fit enrichment calendars, post-examination periods, or interdisciplinary programme tracks.

—

[Links]

- Human Futures AI Design Thinking Workshop → https://c-academy.org/let-out-your-creativity/ai-design-thinking-workshop-singapore/

- EDIT Design Thinking® methodology → https://c-academy.org/design-thinking-course-singapore/

- C-Academy’s sustainability design thinking workshop → https://c-academy.org/let-out-your-creativity/sustainability-design-thinking-workshop/

- Reimagining Learning Spaces workshop → https://c-academy.org/let-out-your-creativity/reimagining-learning-design-thinking-workshop/

- About C-Academy’s facilitators and credentials → https://c-academy.org/about-us/

- Contact C-Academy about school programmes → https://c-academy.org/contact-us/